Biography

I am an ML systems researcher interested in building efficient training and inference systems. I received my PhD from the National University of Singapore in 2026, where I was fortunate to be advised by Prof. Jialin Li and by Wei Lin from Alibaba. I have previously worked at Sea AI Lab, Google XLA, the Alibaba Platform for AI (PAI) team, and Apple Turi.

During my undergraduate studies, I had the privilege (and fun) of spending four years with the NTU HPC club, where we won the Overall Championship at SC'17 and set the LINPACK World Record. I’ve also hosted an MLSys seminar in Singapore.

Outside of my academic pursuits, I enjoy cooking (menu), hiking, and ham radio. My Erdős number is 5.

Download my résumé.

Interests

- Large-Scale Machine Learning

- Automatic Distributed Training

- Distributed Systems

Education

Ph.D. Candidate, Computer Science, 2021 - 2026

National University of Singapore

BEng in Computer Science, 2015 - 2019

Nanyang Technological University

Visiting Student, Fall 2016

New York University

Publications

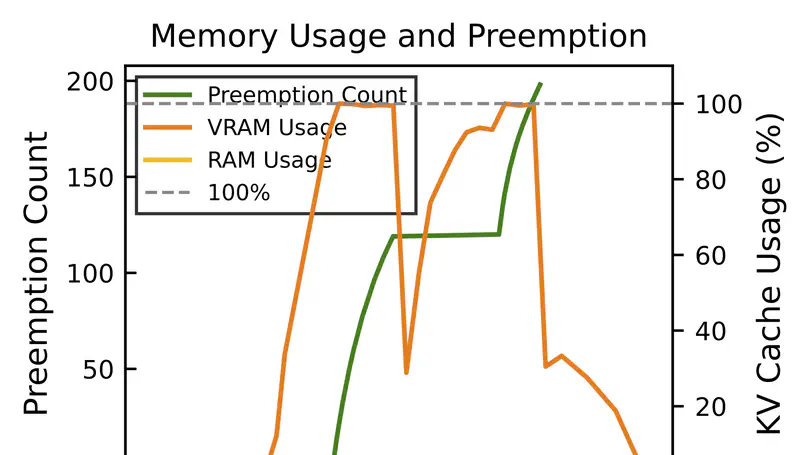

We present a predictive KV cache offloading mechanism that supports ultra-long decoding phases in reasoning and agentic workloads.

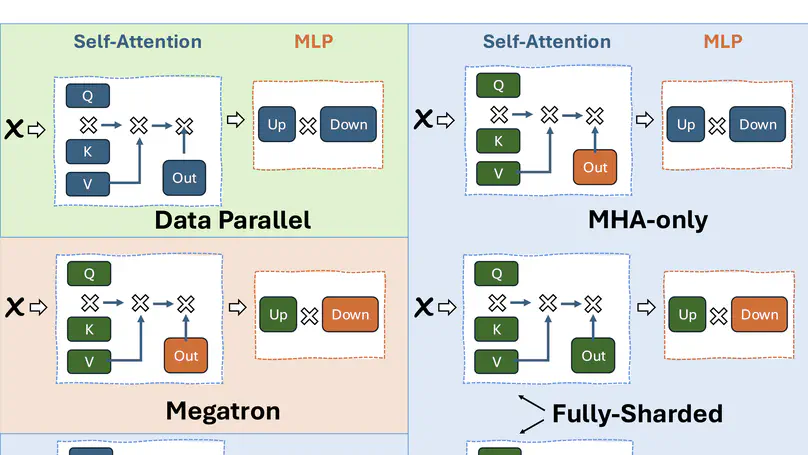

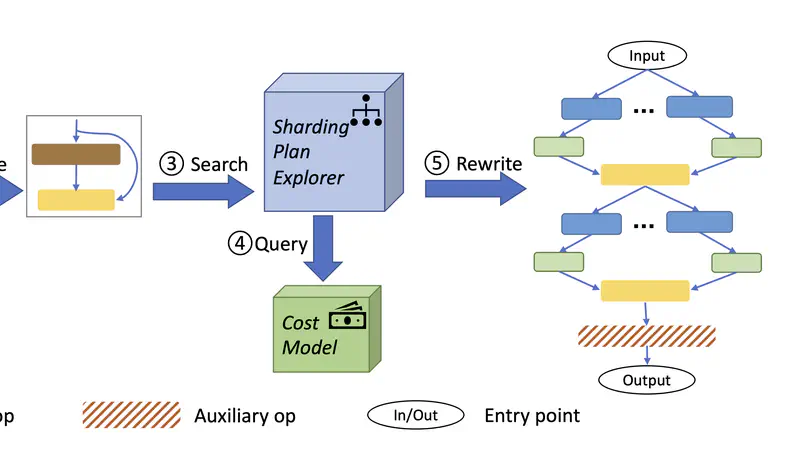

We present a framework that speeds up the derivation of tensor parallel schedules for large neural networks by 160x.

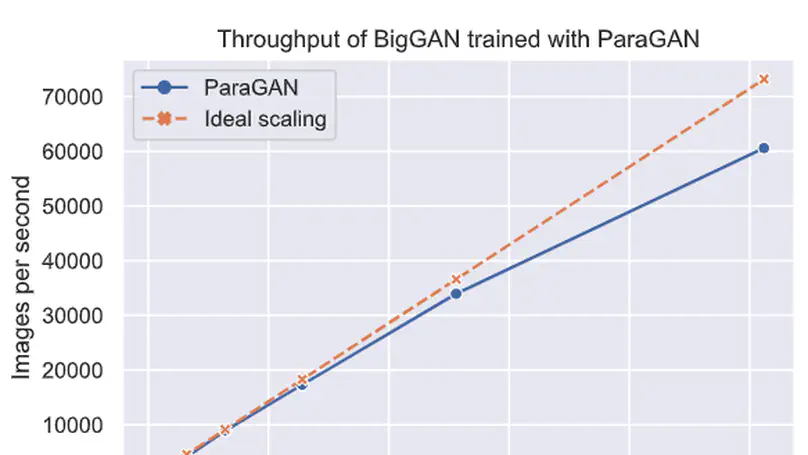

We present ParaGAN, a cloud training framework for GANs that achieves near-optimal scaling across thousands of accelerators through system and training co-design.

We present a framework that speeds up the derivation of tensor parallel schedules for large neural networks.

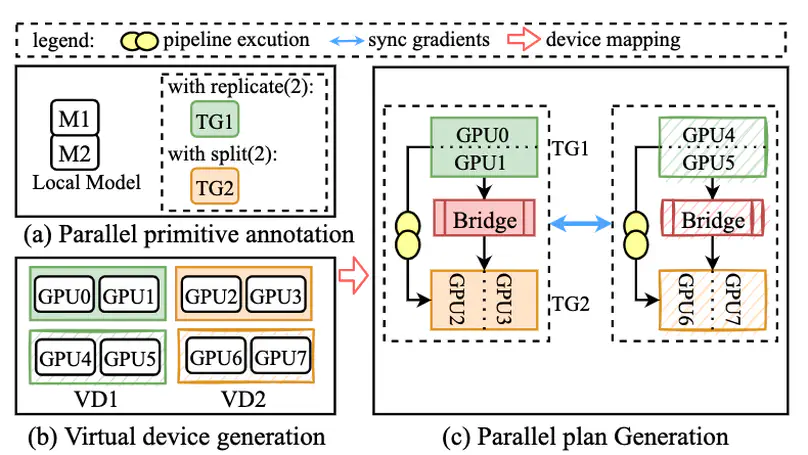

Whale is a highly scalable and efficient distributed training framework for deep neural networks. It introduces a hardware-aware parallel strategy and user-enabled model annotations for optimising large-scale model training. Whale successfully trained a multimodal model with over ten trillion parameters on 512 GPUs.